For the past two weeks I have been working on terrain, and for two months or so before that I had (at irregular intervals) been researching and planning this work. Now, finally, the geometry-generation part of the terrain code is as good as complete.

The first thing I had to decide was what sort of approach to use. There are tons of methods for dealing with terrain and a lot of papers and literature on it. I have some ideas about what the super secret project will need in terms of terrain, but still wanted to keep it as open as possible so that the tech I built now would not become unusable later on. Because of this I needed something that felt customizable and scalable, and that would be able to fit the needs that might arise in the future.

Creating vertices

What I decided on was an updated version of geomipmapping. My main resources were the original paper from 2000 (located here) and the terrain paper for the Frostbite Engine that powers Battlefield: Bad Company (see presentation here). Basically, the method works by having a heightmap of the terrain and then generating all geometry on the GPU. This limits the game to Shader Model 3 cards (for NVIDIA at least; ATI only has it in Shader Model 4 cards in OpenGL) as the heightmap texture needs to be accessed in the vertex shader. This means fewer cards will be able to play the game, but since we will not release until two years or so from now that should not be much of a problem. Also, it would be possible to add a version that precomputes the geometry if it was really needed.

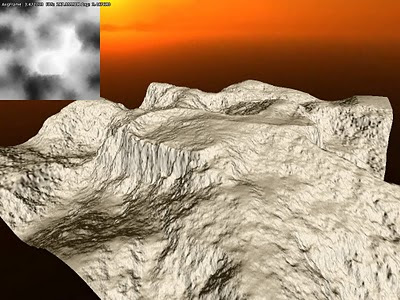

The great thing about doing geomipmapping on the GPU is that it is very easy to vary the amount of detail used and it saves a lot of memory (the heightmap takes about 1/10 of what the vertex data does). Before I go into the geomipmapping algorithm, I will first discuss how to generate the actual data. Basically, what you do is render one or several vertex grids that read from the heightmap and then offset the y-coordinate for each vertex. The normal is also generated by taking four height samples around the current heightmap texel. Here is what it looks like in the G-buffer when normal and depth are generated from a heightmap (which is also included in the image):

Since I spent some time figuring out the normal generation algorithm, here is some explanation of it. The basic algorithm is as follows:

h0 = height(x+1, z)

h1 = height(x-1, z)

h2 = height(x, z+1)

h3 = height(x, z-1)

normal = normalize(h1-h0, 2 * height_texel_ratio, h3-h2)

What happens here is that the slope is calculated along the x-axis and then the z-axis. Slope is defined by:

dx= (h1-h0) / (x1-x0)

or put in words, the difference in height divided by the difference in length. But since the distance is always two units for both x and z, we can skip this division and simply go with the difference in height. Now for the y-component, which we want to be 1 when both slopes are 0 and then gradually decrease as the slopes get higher. For this algorithm we set it to 2 though, since we want to get rid of the division by 2 (which means multiplying all axes by 2). But a problem remains, and that is that the actual height value is not always in the same units as the heightmap texel spacing. To fix this, we need to add a multiplier to the y-axis, which is calculated like this:

height_texel_ratio = max_height / unit_size

I save the heightmap in a normalized form, which means all values are between 0 and 1, and max_height is what each value is multiplied by when calculating the vertex y-value. The unit_size variable is what a texel represents in world space.
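As a rough CPU-side sketch of the four-sample normal (not the actual shader code), the Python below assumes the heightmap is a list of rows of normalized values. The names `height_at` and `terrain_normal` and the edge clamping are my own assumptions. It also scales the samples to world units up front instead of applying a ratio to the y-term, so the y-term becomes simply `2 * unit_size`; that rearrangement is mine and may differ from how the shader handles it.

```python
import math

def height_at(heightmap, x, z):
    """Read a normalized height (0..1), clamping coordinates to the map edge."""
    z = min(max(z, 0), len(heightmap) - 1)
    x = min(max(x, 0), len(heightmap[0]) - 1)
    return heightmap[z][x]

def terrain_normal(heightmap, x, z, max_height, unit_size):
    """Central-difference normal at texel (x, z), in world space."""
    # Scale the four neighbour samples to world-space heights.
    h0 = height_at(heightmap, x + 1, z) * max_height
    h1 = height_at(heightmap, x - 1, z) * max_height
    h2 = height_at(heightmap, x, z + 1) * max_height
    h3 = height_at(heightmap, x, z - 1) * max_height
    # Opposite samples are two texels apart, hence 2 * unit_size.
    # (At the map border the clamped samples are closer, so normals there
    # are only approximate.)
    nx, ny, nz = h1 - h0, 2.0 * unit_size, h3 - h2
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    return (nx / length, ny / length, nz / length)
```

A flat heightmap gives a normal pointing straight up, and a ramp that rises one world unit per texel along x tilts the normal 45 degrees towards negative x.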

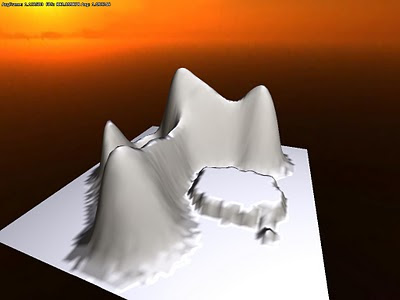

This algorithm is not that exact as it does not take into account the diagonal slopes and such. It works pretty well though and gives nice results. Here is how it looks when it is shaded:

Note that there are some bumpy surfaces at the base of the hills. This is because of precision issues in the heightmap I was using (only 8 bits in the first tests) and is something I will get back to.

Geomipmapping

The basic algorithm is pretty simple: the further a part of the terrain is from the camera, the fewer vertices are used to render it. This works by having a single grid mesh, called a patch, that is drawn several times, each time representing a different part of the terrain. When a terrain patch is near the camera, there is a 1:1 vertex-to-texel coverage ratio, which means that the grid covers a small part of the terrain in the highest possible resolution. Then as patches get further away, the ratio gets smaller, and the grid covers a larger area with fewer vertices per unit of terrain. So for really far away parts of the environment the ratio might be something like 1:128. The idea is that because the part is so far off, the details are not visible anyway. Each ratio can be referred to as a LOD level.
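To make the ratios concrete, here is a small Python sketch of which heightmap texels a single patch would sample at a given LOD level. It assumes the ratios are powers of two (1:1, 1:2, 1:4 and so on) and a patch of `grid_size` x `grid_size` quads; the function name and parameters are hypothetical.

```python
def patch_texels(origin_x, origin_z, level, grid_size=4):
    """Heightmap texel coordinates sampled by one patch.

    level 0 is the 1:1 ratio, level 1 is 1:2, level 2 is 1:4, and so on.
    """
    step = 1 << level  # texels between neighbouring vertices
    return [
        (origin_x + i * step, origin_z + j * step)
        for j in range(grid_size + 1)   # a grid of N quads has N + 1 vertices
        for i in range(grid_size + 1)
    ]
```

The vertex count stays the same at every level; only the spacing grows, so a level 2 patch with 4x4 quads spans 16x16 texels while a level 0 patch spans 4x4.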

The way this works internally is that a quadtree represents the various LOD levels. The engine then traverses this tree and if a node is found beyond a certain distance from the camera then it is picked. The lowest-level nodes, with the 1:1 vertex-to-texel ratio, are always picked if no parent node meets the distance requirement. In this fashion the world is built up each frame.
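The traversal could be sketched roughly like this in Python. The `Node` class, the `select_patches` function and the choice to measure distance to the node centre are all assumptions made for illustration, not the engine's actual data structures.

```python
import math
from dataclasses import dataclass, field

@dataclass
class Node:
    level: int      # 0 = most detailed (1:1) level
    center: tuple   # (x, z) centre of the node in world units
    children: list = field(default_factory=list)

def select_patches(node, camera, min_distance, picked=None):
    """Collect the nodes to draw this frame, starting from the root."""
    if picked is None:
        picked = []
    dist = math.hypot(node.center[0] - camera[0], node.center[1] - camera[1])
    # Pick the node if it is far enough away for its level, or if it is a
    # leaf (nothing more detailed exists to fall back on).
    if dist >= min_distance[node.level] or not node.children:
        picked.append(node)
    else:
        for child in node.children:
            select_patches(child, camera, min_distance, picked)
    return picked
```

Here `min_distance` is a user-set list with one entry per LOD level. Calling `select_patches(root, camera, min_distance)` once per frame returns the patches to draw, each at the coarsest level its distance allows.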

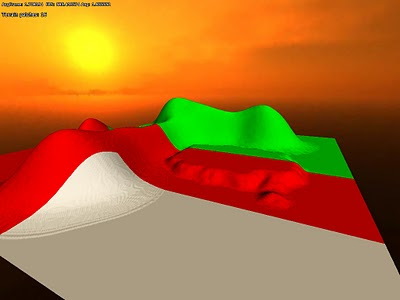

The problem is now to figure out at what distance a particular LOD level becomes usable, and the original paper has some equations on how to do this. These are based on the change in height of the details, but I skipped such calculations and just let it be user-set instead. This is how it looks in action:

White (grey) areas represent a 1:1 ratio, red 1:2 and green 1:4. Now a problem emerges when using grids of different levels next to one another: you get T-junctions where the grids meet (because where the 1:1 patch has two grid quads, the 1:2 patch has only one), resulting in visible seams. To fix this, there need to be special grid pieces at the intersections that create a better transition. The pieces look like this (for a 4x4 grid patch):

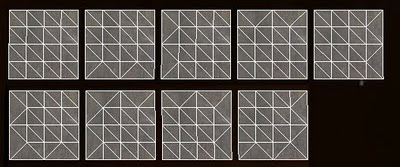

Although there are 16 border permutations in total, only 9 are needed because of how the patches are generated from the quadtree. The same vertex buffer is used for all of these types of patches, and only the index buffer is changed, saving some storage and speeding up rendering a bit (no switch of vertex buffer needed).

The problem is now that there must be a maximum of 1 level of difference between neighboring patches. To make sure of this, the distance check that I talked about earlier needs to take this into account. This distance is calculated by taking the minimum distance from the previous level (0 for the 1:1 level) and adding the diagonal of the AABB (where height is max height) from the previous level.
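The distance rule above can be written out as a short Python sketch. It assumes square patches of `patch_quads` x `patch_quads` quads whose world-space size doubles with each level; the function and parameter names are hypothetical.

```python
import math

def lod_min_distances(num_levels, patch_quads, unit_size, max_height):
    """Minimum camera distance at which each LOD level may be used."""
    distances = [0.0]  # the 1:1 level is usable right at the camera
    for level in range(1, num_levels):
        # World-space side length of a patch on the previous level.
        side = patch_quads * unit_size * (1 << (level - 1))
        # Diagonal of that patch's AABB, using max_height as its height.
        diagonal = math.sqrt(2.0 * side * side + max_height * max_height)
        distances.append(distances[-1] + diagonal)
    return distances
```

These values would act as a lower bound for the user-set distances: setting a level's distance lower than this risks two neighboring patches ending up more than one level apart.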

Enhancing precision

As mentioned before, I used an 8-bit texture for height in the early tests. This gives quite lousy precision so I needed to generate one with higher bit depth. Also, older cards have to use a 32-bit float texture in the vertex shader, so having this was important in several ways. To get hold of this texture I used the demo version of GeoControl and generated a 32-bit heightmap in a raw uncompressed format. Loading that into the code I already had gave me this pretty picture:

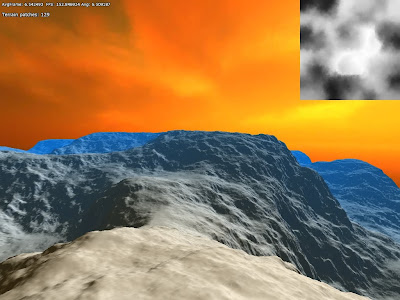

To test how the algorithm worked with larger draw distances, I scaled up the terrain to cover 1x1 km and added some fog:

The sky texture is not really fitting, but I think this shows that the algorithm worked quite well. Also note that I did no tweaking of the LOD-level distances or patch size, so it just changes LOD level as soon as possible and probably renders more polygons than necessary because of the patch size.

Next up I tried to pack the heightmap a bit since I did not want it to take up too much disk space. Instead of writing some kind of custom algorithm, I went the simple route and packed the height data in the same manner as I do with depth in the renderer's G-buffer. The formula for this is:

r = height*256

g = fraction(r)*256

b = fraction(g)*256

This packs the normalized height value into three 8-bit color channels. This 24-bit data gives pretty much all the accuracy needed, and for further disk compression I also saved it as PNG (which has lossless compression). It makes the heightmap data 50% smaller on disk and it looks the same in game when unpacked:

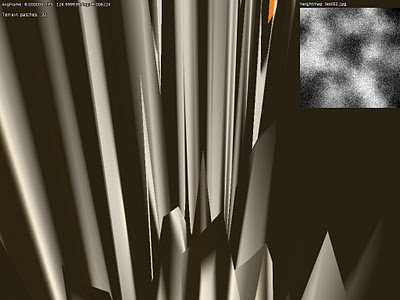
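For reference, the 24-bit packing and its inverse can be sketched in Python as below. This is one reading of the formula, under my own assumptions: each channel stores only the integer part as a byte, and the input is clamped just below 1.0 so that a full-height value still fits. The function names are hypothetical.

```python
import math

def pack_height(height):
    """Pack a normalized height (0..1) into three 8-bit channels."""
    # Clamp just below 1.0 so the red channel never reaches 256.
    height = min(max(height, 0.0), 1.0 - 2.0 ** -24)
    r = height * 256.0
    g = (r - math.floor(r)) * 256.0   # fraction(r) * 256
    b = (g - math.floor(g)) * 256.0   # fraction(g) * 256
    # Only the integer part of each channel is stored.
    return (int(r), int(g), int(b))

def unpack_height(r, g, b):
    """Rebuild the normalized height from the three channels."""
    return r / 256.0 + g / 65536.0 + b / 16777216.0
```

With this scheme the round trip loses less than 1/2^24 of the height range. It also hints at why lossy compression is a bad fit: a small error in the red channel shifts the height by 1/256 of the full range, while the green and blue channels look like noise to a compressor.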

I also tried to pack it as 16-bit, only using the R and B channels, which also looked fine. However, when I tried saving the 24-bit packed data as a JPEG (which uses lossy compression) the result was less than nice:


Final thoughts

There are a few bits left to fix on the geometry. For instance, there is some popping when changing LOD levels and this might be lessened by using a gradual change instead. I first want to see how this looks in game though before getting into that. Some pre-processing could also be used to mark patches of terrain that never need the LOD with the highest detail, and so on. Using hardware tessellation would also be interesting to try out and it should help make surfaces much smoother when close up.

These are things I will try later on though, as right now the focus is to get all the basics working. Next up will be some procedural content generation using Perlin noise and that sort of stuff!

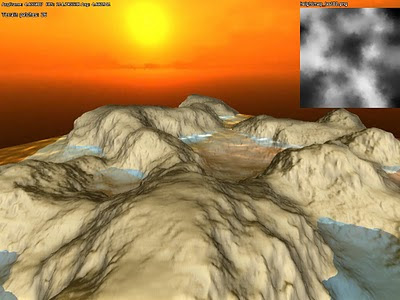

And finally I will leave you with a screenshot containing terrain, water and SSAO:
